Creating a custom environment in OpenAI Gym - Blocking Maze

This short post shows how to create your own OpenAI Gym environment.

The problem

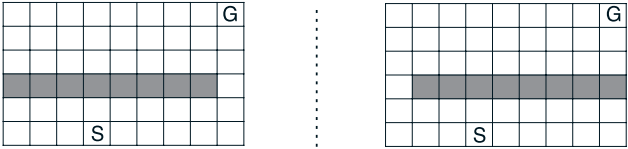

The environment I will implement is “Blocking Maze” from Sutton’s RL book [1] (Chapter 8):

The environment is a simple 6×9 grid world with a wall (the shaded area). The agent needs to find the path along the right side to reach the goal (left image). After some number of steps, the wall position changes and the agent must find a new optimal path (right image).

Image credit: [1]

Code

The official document, “How to create new environments for Gym”, explains everything you will need.

According to the document, the required file structure looks like this:

    gym-foo/
      README.md
      setup.py
      gym_foo/
        __init__.py
        envs/
          __init__.py
          foo_env.py
          foo_extrahard_env.py

Let’s build it.

1. Top level directory

Create a project directory and a README.md file first (the README can be blank at this point):

    mkdir blocking-maze
    cd blocking-maze
    touch README.md

2. setup.py

    from setuptools import setup

    setup(
        name='blocking_maze',
        version='0.0.1',
        install_requires=['gym'],
    )

3. Create other directories inside

    mkdir blocking_maze
    cd blocking_maze
    mkdir envs

4. blocking-maze/blocking_maze/__init__.py

    from gym.envs.registration import register

    register(
        id='blocking-maze-v01',
        entry_point='blocking_maze.envs:MazeEnv1',
    )

5. blocking-maze/blocking_maze/envs/__init__.py

from blocking_maze.envs.blocking_maze_env01 import MazeEnv1

6. blocking-maze/blocking_maze/envs/blocking_maze_env01.py

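Start with a template; you can copy the snippet from the official document. The class must be named MazeEnv1 (the template calls it FooEnv) so that it matches the entry point registered in step 4 and the import in step 5:

    import gym
    from gym import error, spaces, utils
    from gym.utils import seeding


    class MazeEnv1(gym.Env):  # named FooEnv in the official template
        metadata = {'render.modes': ['human']}

        def __init__(self):
            print("init")

        def step(self, action):
            pass

        def reset(self):
            pass

        def render(self, mode='human'):
            pass

        def close(self):
            pass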

You must define the step, reset, and render functions; the base class gym.Env (see gym/gym/core.py) raises a NotImplementedError for any of them that you leave out.

The code does not do anything yet (the full implementation comes later), but let’s verify the installation first.

Install

From the parent directory (the one containing blocking-maze/), run:

pip install -e blocking-maze

Then verify it works:

    $ python
    >>> import gym
    >>> gym.make('blocking_maze:blocking-maze-v01')
    init
    <blocking_maze.envs.blocking_maze_env01.MazeEnv1 object at 0x1052b9cf8>
    >>>

Looks like it’s working!

Defining the details

Now that the environment is installed, let’s implement the actual logic.

The implementation is fairly straightforward. Please refer to this gist for the full code: blocking_maze_env01.py

The main things to implement are:

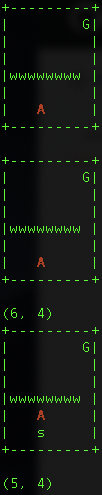

- Define your map (I used 'w' to represent walls and whitespace for walkable tiles)
- Implement all required functions: step, reset, and render, along with some helper functions
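As a rough illustration of the two points above, here is a minimal sketch of a map definition and a movement helper of the kind step could call. It is not the code from the gist: the layouts are reconstructed from the book figure, and the names MAP_1, MAP_2, ACTIONS, find, and move, as well as the action order, are assumptions made for this example.

```python
# Hypothetical layouts: 6 rows x 9 columns.
# 'w' = wall, ' ' = walkable, 'S' = start, 'G' = goal.
MAP_1 = [
    "        G",
    "         ",
    "         ",
    "wwwwwwww ",
    "         ",
    "   S     ",
]
MAP_2 = [
    "        G",
    "         ",
    "         ",
    " wwwwwwww",
    "         ",
    "   S     ",
]

# Action index -> (row delta, column delta): up, down, left, right (assumed order).
ACTIONS = [(-1, 0), (1, 0), (0, -1), (0, 1)]


def find(grid, symbol):
    """Return the (row, col) of the first cell containing `symbol`."""
    for r, row in enumerate(grid):
        c = row.find(symbol)
        if c != -1:
            return (r, c)
    raise ValueError(f"{symbol!r} not found in map")


def move(grid, loc, action):
    """Apply one action; the agent stays put if it would hit a wall or leave the grid."""
    dr, dc = ACTIONS[action]
    r, c = loc[0] + dr, loc[1] + dc
    inside = 0 <= r < len(grid) and 0 <= c < len(grid[0])
    if not inside or grid[r][c] == "w":
        return loc
    return (r, c)


print(find(MAP_1, "S"))        # (5, 3)
print(move(MAP_1, (4, 0), 0))  # wall above, so the agent stays at (4, 0)
print(move(MAP_1, (4, 8), 0))  # gap on the right, so the agent moves to (3, 8)
```

Keeping the map as a list of strings makes the two configurations easy to compare by eye, and a pure helper like move can be tested without creating a Gym environment.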

I also defined action_space and observation_space as follows (though this is not strictly required for the code to run):

    self.action_space = spaces.Discrete(len(self.actions))
    self.observation_space = spaces.Discrete(46)
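One plausible reading of the 46 is that the 6×9 grid has 54 cells, of which 8 are wall cells, leaving 46 walkable states. The sketch below checks that arithmetic and shows one way to map a (row, col) location to a discrete state id. The wall position and the names ROWS, COLS, WALLS, CELLS, and STATE_ID are assumptions for this example, not taken from the gist.

```python
ROWS, COLS = 6, 9

# Assumed wall for the first configuration: row 3, columns 0 to 7.
WALLS = {(3, c) for c in range(8)}

# Enumerate walkable cells in row-major order and give each one a state id.
CELLS = [(r, c) for r in range(ROWS) for c in range(COLS) if (r, c) not in WALLS]
STATE_ID = {cell: i for i, cell in enumerate(CELLS)}

print(len(CELLS))        # 46
print(STATE_ID[(0, 0)])  # 0
print(STATE_ID[(5, 8)])  # 45
```

Note that under this scheme the id of a given cell can change when the wall moves, because a different set of cells becomes walkable; the total stays at 46 as long as the wall keeps the same length.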

Once the implementation is complete, test it like this:

    import random

    import gym

    env = gym.make('blocking_maze:blocking-maze-v01')
    env.reset()  # Gym expects reset() before the first step()
    env.render()

    done = False
    while not done:
        action = random.randrange(4)  # pick one of the four moves at random
        observation, reward, done, info = env.step(action)
        env.render()
        print(env.a_loc)

It works!

I also implemented a switch_maze function that switches the map layout from configuration 1 to configuration 2. You can use this to replicate the experiment from the book (e.g., changing the environment after 1,000 timesteps). Enjoy!
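The experiment loop could look roughly like the sketch below. TwoLayoutMaze is a stand-in written only so the example runs on its own. It is not the real environment, and the no-argument switch_maze() signature is an assumption, since the post does not show it.

```python
class TwoLayoutMaze:
    """Stand-in for the real environment; it only tracks which layout is active."""

    def __init__(self):
        self.layout = 1

    def switch_maze(self):
        # Hypothetical signature: the real method lives in the gist.
        self.layout = 2

    def step(self, action):
        # Placeholder transition; the real env would move the agent here.
        return 0, 0.0, False, {}


def run(env, total_steps=3000, switch_at=1000):
    """Step the env and switch the layout once `switch_at` steps have elapsed."""
    history = []
    for t in range(total_steps):
        if t == switch_at:
            env.switch_maze()
        env.step(0)
        history.append(env.layout)
    return history


layouts = run(TwoLayoutMaze())
print(layouts[999], layouts[1000])  # 1 2
```

Counting timesteps in the training loop rather than inside the environment keeps the switch point easy to change between runs.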