Back-to-Basics: Dropout paper notes

I noticed that I have never read the original dropout paper even though it is a technique I have used for a long time.

Dropout: A Simple Way to Prevent Neural Networks from Overfitting

This technique has long been known for its effectiveness in preventing neural networks from overfitting.

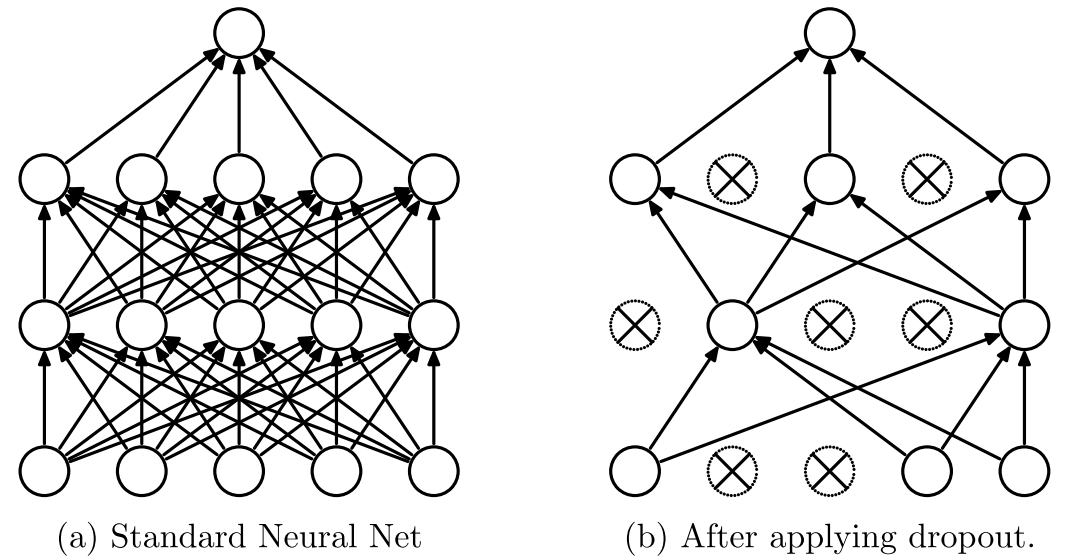

The key idea is simply to drop some network connections randomly during training. This simple idea “prevents units from co-adapting too much” (quoted from the paper), which improves the generalizability of networks and, in turn, improves test performance.

The authors showed that dropout improved network performance and achieved state-of-the-art results on SVHN, ImageNet, CIFAR-100 and MNIST.

“The central idea of dropout is to take a large model that overfits easily and repeatedly sample and train smaller sub-models from it” is reminiscent of ensembling. It is like training multiple models, and the use of sub-models derived from a single network is what makes dropout unique. While ensembling networks with different architectures can perform better if you do not mind the extra work and computational cost, dropout provides an easier way to capture the benefits of ensembling.

The cost is training time. The authors reported that it takes 2–3 times longer to train a network with dropout.